Recently, I had a customer go through a merger, and they inherited another StoreOnce located at a remote site. We decided to enable Catalyst copy from the customer’s existing StoreOnce to the inherited StoreOnce to enhance the customer’s backup and recovery strategy. The only issue was that the existing StoreOnce Catalyst store was larger than the available capacity on the inherited StoreOnce, which already had the capacity expansion licensed and installed.

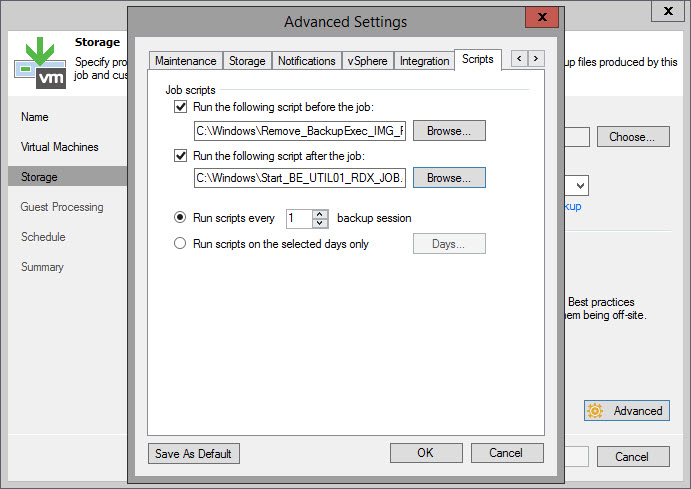

Upon further investigation, I discovered that the customer’s Catalyst store had several thousand orphaned Veeam backups from over the years that were no longer present in the VBR database, nor were they picked up by Veeam when rescanning the repository. Deleting these orphaned Veeam files would easily free up enough space in the source Catalyst store to match what was available in the inherited StoreOnce. All I needed to do was delete these orphaned files!

This, however, was much easier to say than to do. Because Veeam wasn’t detecting them, I couldn’t use the VBR interface to just select them and delete them from disk. The StoreOnce 4.x WebUI includes the option to list the items in the Catalyst store and delete them. Unfortunately, it only allows you to select one item at a time, then click delete, and then click through an “are you sure” warning. All told, it probably takes about 8 to 11 seconds per item to delete it, then you need to navigate through the items list again to find the next aged item and repeat this process. This is fine if you only have a handful of items you need to delete. I had somewhere beyond 5800 items to clean up!
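To put those numbers in perspective, here is a quick back-of-the-envelope sketch in Python. It only multiplies the figures quoted above (5800 items, 8 to 11 seconds each) and ignores the extra time spent re-navigating the item list, so treat the result as a lower bound rather than a measurement. The function name is just something I made up for illustration.

```python
def manual_delete_hours(items: int, seconds_per_item: float) -> float:
    """Hours spent clicking through deletions, ignoring time spent re-finding items."""
    return items * seconds_per_item / 3600


ITEMS = 5800  # "somewhere beyond 5800 items", so this is a lower bound

low = manual_delete_hours(ITEMS, 8)    # best case: 8 seconds per item
high = manual_delete_hours(ITEMS, 11)  # worst case: 11 seconds per item

print(f"{low:.1f} to {high:.1f} hours")  # prints "12.9 to 17.7 hours"
```

In other words, well over a full working day of nothing but clicking.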

I recalled that HPE offers a tool called “HPE StoreOnce Catalyst Copy Utility”. It is specifically designed to copy backup items to alternate StoreOnce appliances for safekeeping, delete backups that are obsolete or orphaned, and synchronize backup copies between a primary backup target and a disaster recovery site. It can be downloaded from the HPE Software Center (https://myenterpriselicense.hpe.com). What I found out, though, is that the documentation on creating the credential file is a bit sparse, so I’m going to take the time to explain how to actually use the tool here.

And as always before I begin:

Use any tips, tricks, or scripts I post at your own risk.

Once you have downloaded the tool from the HPE Software Center, run the installer and accept all the defaults. If you are on a Windows machine, this means it’s going to install to C:\Program Files\HPE\StoreOnce\isvsupport\HPE-Catalyst-CATTOOLS

The HPE StoreOnce Catalyst Copy Utility is strictly a console-based app; there is no GUI at all. To get started, open an Administrative Command Prompt and navigate to C:\Program Files\HPE\StoreOnce\isvsupport\HPE-Catalyst-CATTOOLS\bin

The first thing you need to do is create an encrypted password file for your Catalyst store. To do this, you run:

StoreOnceCatalystCredentials.exe --add -u UserName -s StoreOnce_IP -o pass.txt

Note: the UserName is the username with permissions to the Catalyst store, which may or may not be the same as the Admin account for the StoreOnce (in fact, from a security perspective, it should be totally different!). If you copied and pasted these command lines, take note that your browser may replace the double dash (--) in front of an option with a single long dash, causing the commands to fail.
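If you do end up with a pasted command full of long dashes and curly quotes, a small clean-up script can save some retyping. The following is only a sketch: the function name is hypothetical, and it assumes that a long dash followed by a single letter was originally a short option (such as -u) while a long dash followed by a longer word was originally a double dash. Always read the result before running it.

```python
import re


def normalize_pasted_command(cmd: str) -> str:
    """Undo common typographic substitutions in a pasted command line."""
    # Curly double quotes -> straight double quotes.
    cmd = cmd.replace("\u201c", '"').replace("\u201d", '"')
    # Assumption: a long dash before a single letter was a short option ("-s").
    cmd = re.sub(r"(?<!\S)[\u2013\u2014](?=[A-Za-z](?:\s|$))", "-", cmd)
    # Assumption: any other long dash that starts a word was a long option ("--list").
    cmd = re.sub(r"(?<!\S)[\u2013\u2014](?=[A-Za-z])", "--", cmd)
    return cmd


pasted = "tool.exe \u2013list \u2013origin \u201c10.0.0.1\u201d \u2013password-file pass.txt"
print(normalize_pasted_command(pasted))
# prints: tool.exe --list --origin "10.0.0.1" --password-file pass.txt
```

Ordinary hyphens, including the ones inside option names like password-file, are left alone.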

(You’ll also note that some of my screenshots are blurred and some are not… I got sidetracked in the middle of writing this and became lazy, since there really isn’t anything here that is secret anyway.)

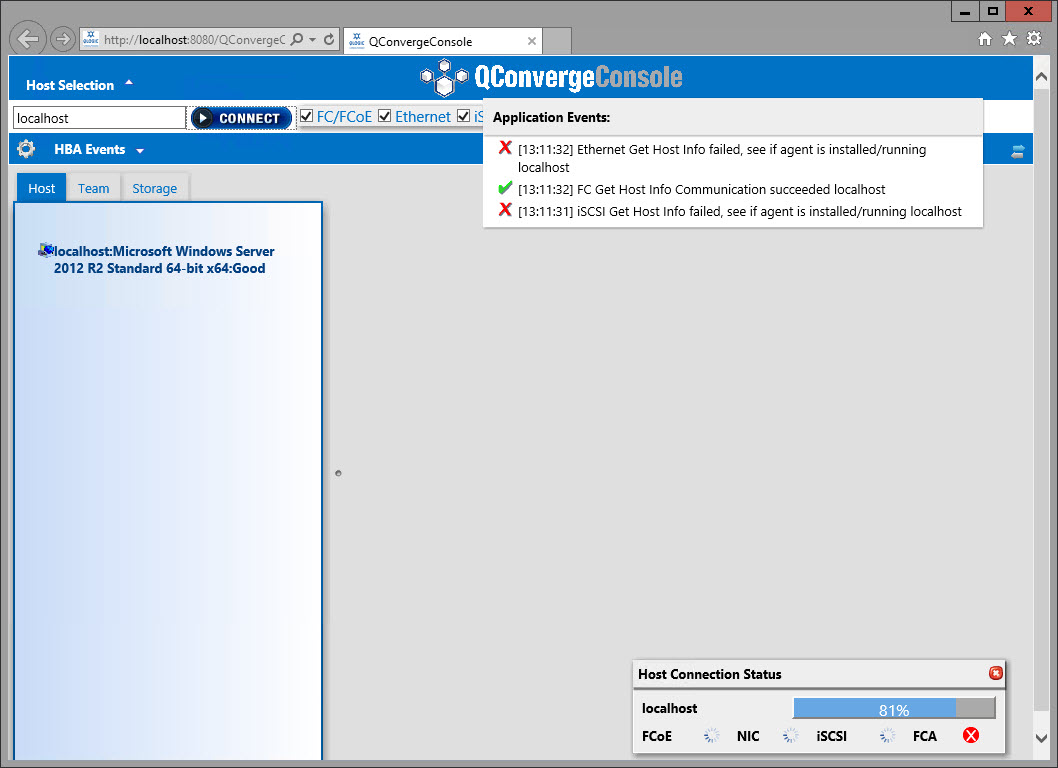

Now that we have our password file, let’s make sure we can connect to the Catalyst store. To do this, run:

StoreOnceCatalystCopy.exe --list --origin "StoreOnce_IP" --origin-store "CATALYST_STORE_NAME" --username "USERNAME" --password-file pass.txt

You should get a summary back similar to below that shows the current Catalyst Copy Jobs status.

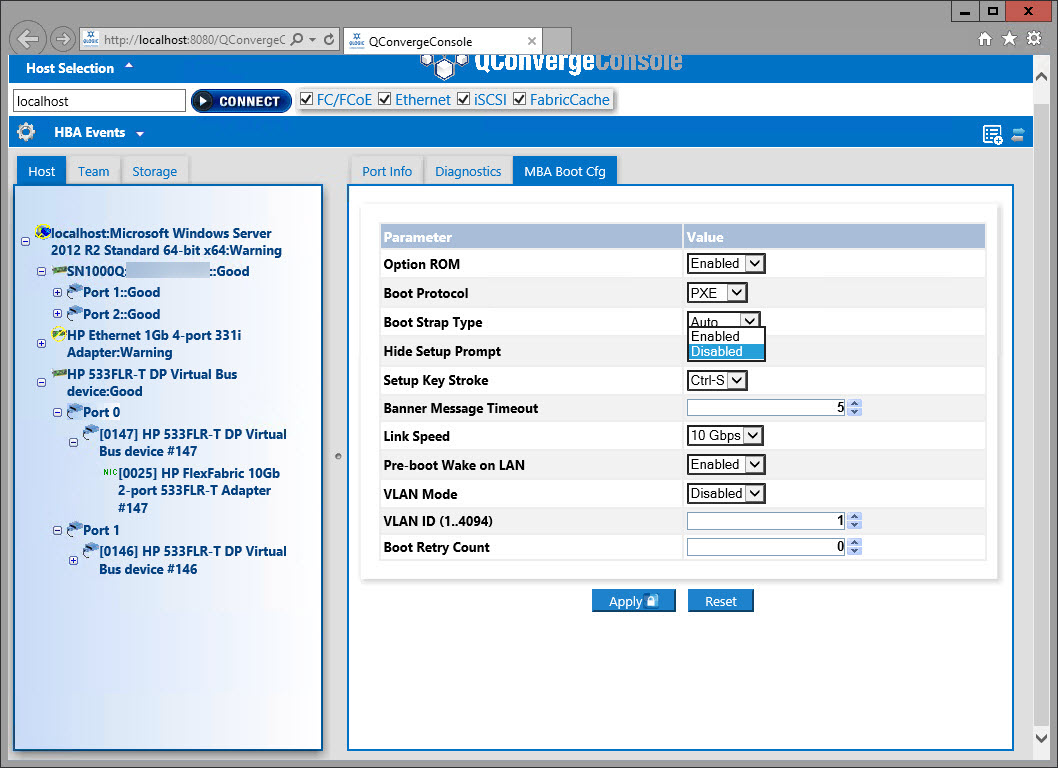

Back in the WebUI, I’ve filtered by “create date” to find those really old orphaned backups. In my example here, I’m going to remove all the files created prior to May 24, 2020. That is 5 files in this example, and deleting them will also break the Veeam backup chain for a couple of them, which is something to keep in mind!

To delete these files with HPE StoreOnce Catalyst Copy Utility, the syntax is:

StoreOnceCatalystCopy.exe --delete-items --filtercreateddaterange [dd/mm/yyyy-hr:mm:ss]:[dd/mm/yyyy-hr:mm:ss] --origin "StoreOnce_IP" --origin-store "CATALYST_STORE_NAME" --username "USERNAME" --password-file pass.txt --force

So in my case I’m going to delete everything created between January 1, 2018 and May 24, 2020, so it would be:

StoreOnceCatalystCopy.exe --delete-items --filtercreateddaterange [01/01/2018-00:00:00]:[24/05/2020-00:00:00] --origin "192.168.99.29" --origin-store "VEEAM01" --username "dcc" --password-file pass.txt --force
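The day-first date format is easy to get wrong by hand, so here is a hedged Python sketch that builds the range string and the argument list for the delete command. The helper names are my own, the options are simply the ones shown above written with double dashes, and I am assuming “hr” means a 24-hour clock. The script only prints the command; it does not run anything.

```python
from datetime import datetime

# dd/mm/yyyy-hr:mm:ss as shown above; a 24-hour clock is an assumption.
DATE_FORMAT = "%d/%m/%Y-%H:%M:%S"


def created_date_range(start: datetime, end: datetime) -> str:
    """Format a [start]:[end] value for the created-date filter."""
    if start > end:
        raise ValueError("start must not be later than end")
    return f"[{start.strftime(DATE_FORMAT)}]:[{end.strftime(DATE_FORMAT)}]"


def build_delete_args(origin, store, username, password_file, start, end):
    """Return the delete command as an argument list (hypothetical helper)."""
    return [
        "StoreOnceCatalystCopy.exe",
        "--delete-items",
        "--filtercreateddaterange", created_date_range(start, end),
        "--origin", origin,
        "--origin-store", store,
        "--username", username,
        "--password-file", password_file,
        "--force",
    ]


args = build_delete_args(
    "192.168.99.29", "VEEAM01", "dcc", "pass.txt",
    datetime(2018, 1, 1), datetime(2020, 5, 24),
)
print(" ".join(args))  # review the command before running it yourself
```

Because this delete is forced and cannot be undone from Veeam, printing the command for review first seemed like the safer design than having the script execute it.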

As you can see, the HPE StoreOnce Catalyst Copy Utility has removed the 5 files older than May 24, 2020. It took only a few seconds in total.

And these deletions are now reflected in the WebUI once I refresh it.

For a full list of the options, advanced filters, and settings related to the HPE StoreOnce Catalyst Copy Utility, be sure to download the user guide from the same page you downloaded the utility from at the HPE Software Center.

And the 5800+ items I had to purge? It was around 294 TiB of capacity and it took a little under 2 hours to complete with this method. The StoreOnce Housekeeping Space Reclamation process is working away at reclaiming all that capacity now.
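For the curious, here is the rough arithmetic behind those numbers as a small Python sketch. I am rounding “a little under 2 hours” up to exactly 2 hours, so the real per-item time was slightly better than what this prints. Keep in mind this is the rate at which items were deleted from the store, not the rate at which Housekeeping physically reclaims the space.

```python
ITEMS = 5800                 # "5800+" items, so a lower bound
ELAPSED_SECONDS = 2 * 3600   # "a little under 2 hours", treated as an upper bound
CAPACITY_TIB = 294           # approximate capacity of the purged items

seconds_per_item = ELAPSED_SECONDS / ITEMS
tib_per_hour = CAPACITY_TIB / (ELAPSED_SECONDS / 3600)

print(f"{seconds_per_item:.2f} s per item, {tib_per_hour:.0f} TiB per hour")
# prints "1.24 s per item, 147 TiB per hour"
```

Compared with the 8 to 11 seconds per item in the WebUI, that works out to roughly 6 to 9 times faster, and with no clicking.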
